Scaling Cross-Embodiment World Models for Dexterous Manipulation

Abstract

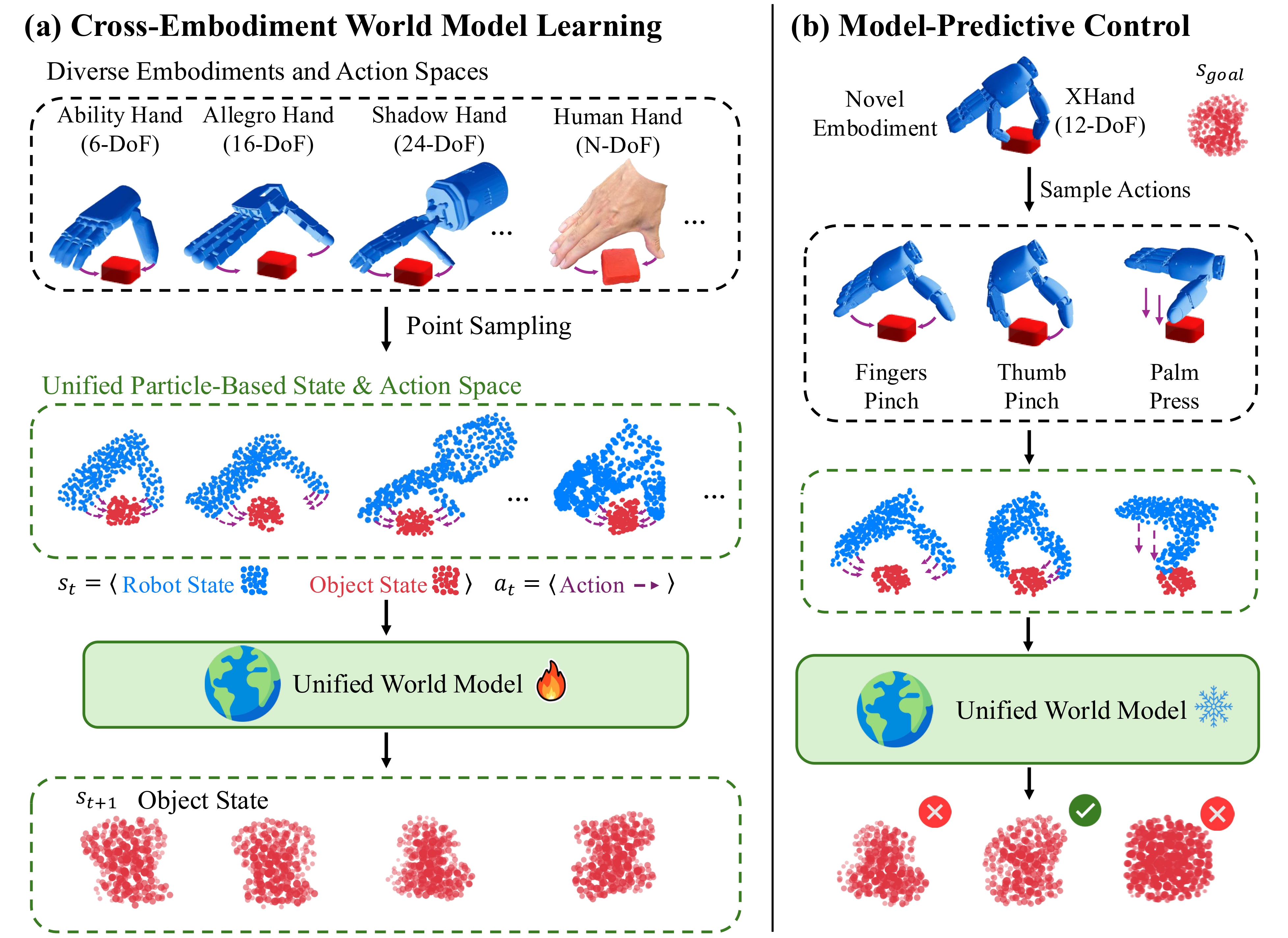

Cross-embodiment learning seeks to build generalist robots that operate across diverse morphologies, but differences in action spaces and kinematics hinder data sharing and policy transfer. This raises a central question: Is there any invariance that allows actions to transfer across embodiments? We conjecture that environment dynamics are embodiment-invariant, and that world models capturing these dynamics can provide a unified interface across embodiments. To learn such a unified world model, the crucial step is to design state and action representations that abstract away embodiment-specific details while preserving control relevance. To this end, we represent different embodiments (e.g., human hands and robot hands) as sets of 3D particles and define actions as particle displacements, creating a shared representation for heterogeneous data and control problems. A graph-based world model is then trained on exploration data from diverse simulated robot hands and real human hands, and integrated with model-based planning for deployment on novel hardware. Experiments on rigid and deformable manipulation tasks reveal three findings: (i) scaling to more training embodiments improves generalization to unseen ones, (ii) co-training on both simulated and real data outperforms training on either alone, and (iii) the learned models enable effective control on robots with varied degrees of freedom. These results establish world models as a promising interface for cross-embodiment dexterous manipulation.

Method

Our approach addresses the challenge of transferring manipulation skills across different robotic embodiments by introducing a unified particle-based representation. Key components include:

- Particle Representation: Robot hands, human hands, and objects are all represented as sets of 3D particles, creating a shared state and action space across embodiments.

- Action Encoding: Actions are expressed as particle displacement fields, enabling a consistent action representation regardless of the specific embodiment's degrees of freedom.

- World Model: A particle-based graph neural network (DPI-Net) serves as the world model, leveraging graph structure to model interactions between particles and incorporating strong inductive biases for manipulation tasks.

- Zero-Shot Transfer: The learned world model generalizes to diverse real robot hands with different kinematic structures without additional training.
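The particle representation and displacement actions listed above can be illustrated with a minimal sketch. Everything here is an assumption for illustration: the function names `displacement_action` and `radius_edges`, the toy coordinates, and the radius-based rule for connecting particles are hypothetical and are not taken from the authors' implementation.

```python
import numpy as np

def displacement_action(hand_t: np.ndarray, hand_next: np.ndarray) -> np.ndarray:
    """Per-particle displacement between two consecutive hand particle sets.

    Both arrays have shape (P, 3) and must list particles in the same order.
    """
    if hand_t.shape != hand_next.shape or hand_t.shape[-1] != 3:
        raise ValueError("expected two (P, 3) arrays with matching shapes")
    return hand_next - hand_t

def radius_edges(points: np.ndarray, radius: float) -> np.ndarray:
    """Directed edges (i, j) between distinct particles closer than `radius`.

    Assumption: a simple distance threshold decides which particles interact.
    """
    diff = points[:, None, :] - points[None, :, :]
    dist = np.linalg.norm(diff, axis=-1)
    mask = (dist < radius) & ~np.eye(len(points), dtype=bool)
    return np.argwhere(mask)

# Toy example with hypothetical values: 2 hand particles, 2 object particles.
hand_t = np.array([[0.0, 0.0, 0.0], [0.1, 0.0, 0.0]])
hand_next = hand_t + np.array([0.0, 0.0, -0.02])  # hand moves 2 cm down
obj = np.array([[0.0, 0.0, -0.05], [1.0, 1.0, 1.0]])

action = displacement_action(hand_t, hand_next)  # shape (2, 3)
state = np.concatenate([hand_t, obj], axis=0)    # shape (4, 3)
edges = radius_edges(state, radius=0.12)         # graph input for a GNN
```

Because the action is defined on particles rather than joints, the same array layout can describe a 6-DoF hand, a 12-DoF hand, or a human hand; only the number of particles changes.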

Results

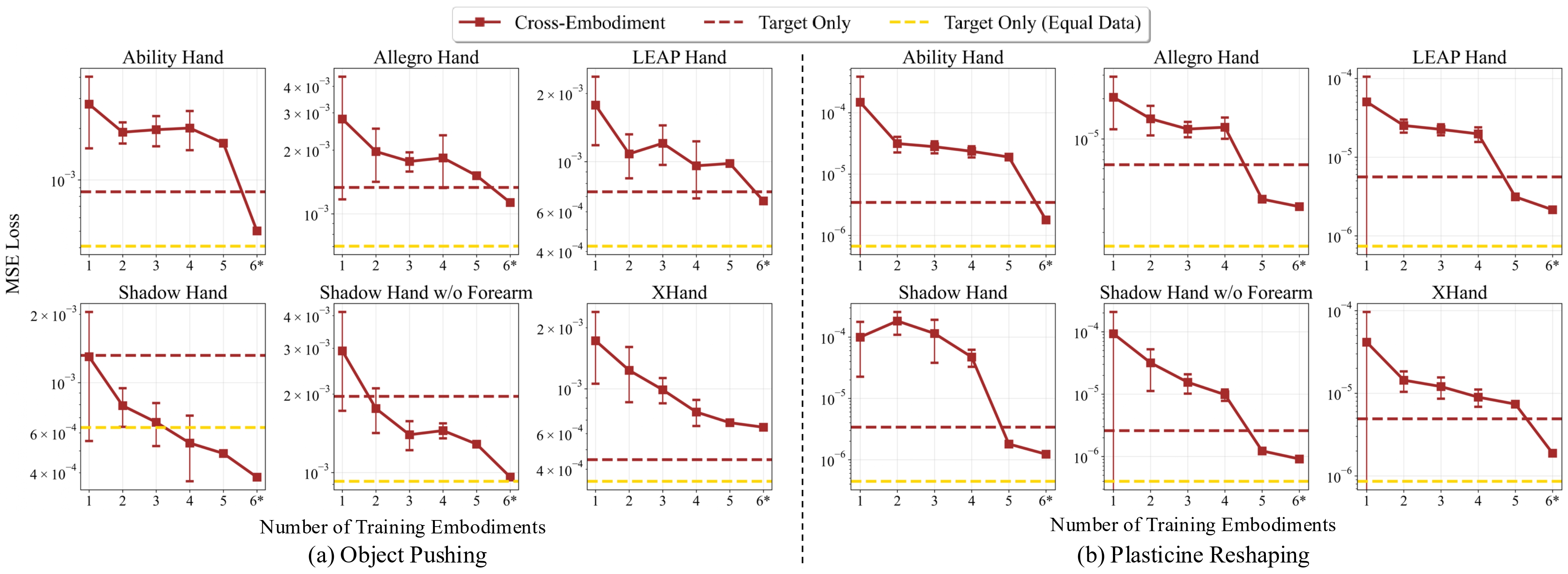

Evaluating Cross-embodiment World Model Learning

We systematically evaluate how the number of training embodiments influences generalization to unseen embodiments. For each target hand, we hold it out and train on x other hands, enumerating all C(N,x) subsets from N=6 total hands. In addition, the case x=6 corresponds to training on all hands, including the target, and provides a reference for the upper bound of cross-embodiment learning in the current data regime.
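The hold-out protocol can be sketched as a small enumeration. This is an illustration under stated assumptions: the hand names are placeholders, `training_subsets` is a hypothetical helper, and the sketch assumes that for x below 6 the subsets are drawn only from the hands other than the target, which gives C(5, x) subsets per target.

```python
from itertools import combinations

# Placeholder names; the actual hands used in the experiments are not listed here.
HANDS = ["hand_a", "hand_b", "hand_c", "hand_d", "hand_e", "hand_f"]

def training_subsets(hands, target, x):
    """Training sets of size x for one held-out target hand.

    x == len(hands) is the reference case that trains on all hands,
    including the target. Otherwise the target is excluded (assumption).
    """
    if target not in hands:
        raise ValueError(f"unknown target hand: {target}")
    if x == len(hands):
        return [tuple(hands)]
    others = [h for h in hands if h != target]
    if not 1 <= x <= len(others):
        raise ValueError(f"x must be between 1 and {len(hands)}")
    return list(combinations(others, x))

# Number of training sets per value of x for a single target hand.
counts = {x: len(training_subsets(HANDS, "hand_a", x)) for x in range(1, 7)}
```

Repeating this for each of the six targets and averaging the held-out error per x would yield one scaling curve over the number of training embodiments.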

Key Observations

- Embodiment scaling law: More training embodiments lead to better generalization to unseen embodiments.

- Zero-shot strength at x=5: Performance can surpass direct training on the target embodiment without ever seeing it.

- Benefit of co-training at x=6*: Training on all embodiments outperforms direct training on the target embodiment.

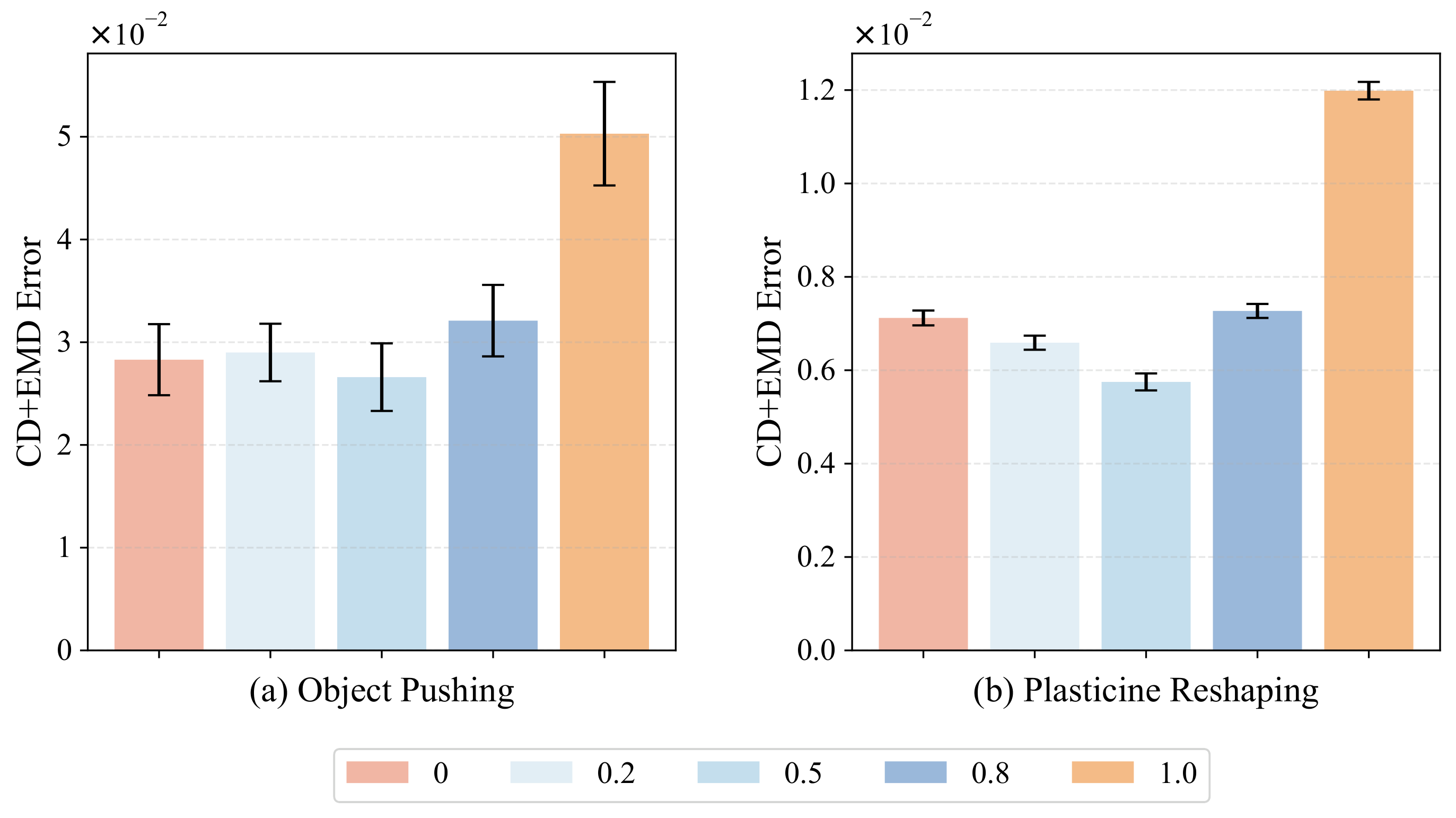

Co-Training Recipe

We co-train a single world model on a mix of simulated robot interactions and real human demonstrations, and sweep the amount of simulation data relative to a fixed human dataset. Evaluated on held-out human interactions using Chamfer Distance (CD) and Earth Mover's Distance (EMD) error, simulation-only training performs worst, human-only training is a strong baseline, and a balanced ~1:1 sim-to-human mix yields the best accuracy, suggesting that simulation acts as a regularizer rather than a replacement for real data.
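As a reference for the CD metric, the following is a minimal sketch of a Chamfer distance between predicted and ground-truth particle sets. Conventions vary (sum or mean, squared or unsquared distances); this version assumes the mean of unsquared nearest-neighbor distances in both directions, which may differ from the exact definition used in the evaluation. The function name `chamfer_distance` is hypothetical.

```python
import numpy as np

def chamfer_distance(pred: np.ndarray, target: np.ndarray) -> float:
    """Symmetric Chamfer distance between point sets of shape (P, 3) and (Q, 3).

    Assumed convention: mean nearest-neighbor distance from pred to target
    plus mean nearest-neighbor distance from target to pred.
    """
    # Pairwise Euclidean distances, shape (P, Q).
    d = np.linalg.norm(pred[:, None, :] - target[None, :, :], axis=-1)
    return float(d.min(axis=1).mean() + d.min(axis=0).mean())

# Toy check with hypothetical points.
a = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
b = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
c = np.array([[0.0, 1.0, 0.0]])

same = chamfer_distance(a, b)     # identical sets
shifted = chamfer_distance(a, c)  # a vs. a single offset point
```

Unlike EMD, this metric does not require a one-to-one matching between particles, so the two sets may have different sizes.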

Evaluating Model-Based Control

We evaluate the learned cross-embodiment world models for model-based control on real robot hardware. The following videos demonstrate the zero-shot transfer capabilities of our approach across two robotic embodiments (Ability Hand and XHand) with different kinematic structures and action spaces.
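To make the idea of planning with a world model concrete, here is a generic random-shooting sketch. It is not the planner used in this work: `toy_model` is a stand-in for a learned dynamics model, `plan_random_shooting` and all parameter values are hypothetical, and the step that maps particle displacements to joint commands on a specific hand is omitted.

```python
import numpy as np

def toy_model(obj: np.ndarray, hand_disp: np.ndarray) -> np.ndarray:
    """Stand-in dynamics: object particles shift by the mean hand displacement."""
    return obj + hand_disp.mean(axis=0)

def plan_random_shooting(obj, goal, model, n_hand=4, n_samples=256,
                         scale=0.05, seed=0):
    """Sample candidate displacement actions and keep the lowest-cost one.

    Cost is the mean per-particle distance between the predicted object
    particles and the goal particles (an assumed cost for illustration).
    """
    rng = np.random.default_rng(seed)
    candidates = rng.uniform(-scale, scale, size=(n_samples, n_hand, 3))
    costs = np.array([
        np.linalg.norm(model(obj, a) - goal, axis=-1).mean()
        for a in candidates
    ])
    best = int(np.argmin(costs))
    return candidates[best], float(costs[best])

# Toy problem: move 5 object particles 3 cm along the x axis.
obj = np.zeros((5, 3))
goal = obj + np.array([0.03, 0.0, 0.0])
best_action, best_cost = plan_random_shooting(obj, goal, toy_model)
```

Because the search happens in the shared particle-displacement space, the same loop applies to any hand; only the final conversion to hardware commands is embodiment-specific.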

All videos are played at 5× speed.

Ability Hand (6-DoF) Results

Ability Hand Reshaping: Letter X

Ability Hand Reshaping: Letter R

XHand (12-DoF) Results

XHand Reshaping: Letter X

XHand Reshaping: Letter R

XHand Reshaping: Letter T

XHand Reshaping: Letter A

Citation

@article{he2025scaling,
  title={Scaling Cross-Embodiment World Models for Dexterous Manipulation},
  author={He, Zihao and Ai, Bo and Mu, Tongzhou and Liu, Yulin and Wan, Weikang and Fu, Jiawei and Du, Yilun and Christensen, Henrik I. and Su, Hao},
  journal={arXiv preprint arXiv:2511.01177},
  year={2025}
}